2nd June 2025 - Link Blog

claude-trace (via) I've been thinking for a while it would be interesting to run some kind of HTTP proxy against the Claude Code CLI app to intercept its API traffic and take a peek at how it works.

Mario Zechner just published a really nice version of that. It works by monkey-patching global.fetch and the Node HTTP library and then running Claude Code using Node with an extra --require interceptor-loader.js option to inject the patches.

Provided you have Claude Code installed and configured already, an easy way to run it is via npx like this:

npx @mariozechner/claude-trace --include-all-requests

I tried it just now and it logs request/response pairs to a .claude-trace folder, as both jsonl files and HTML.

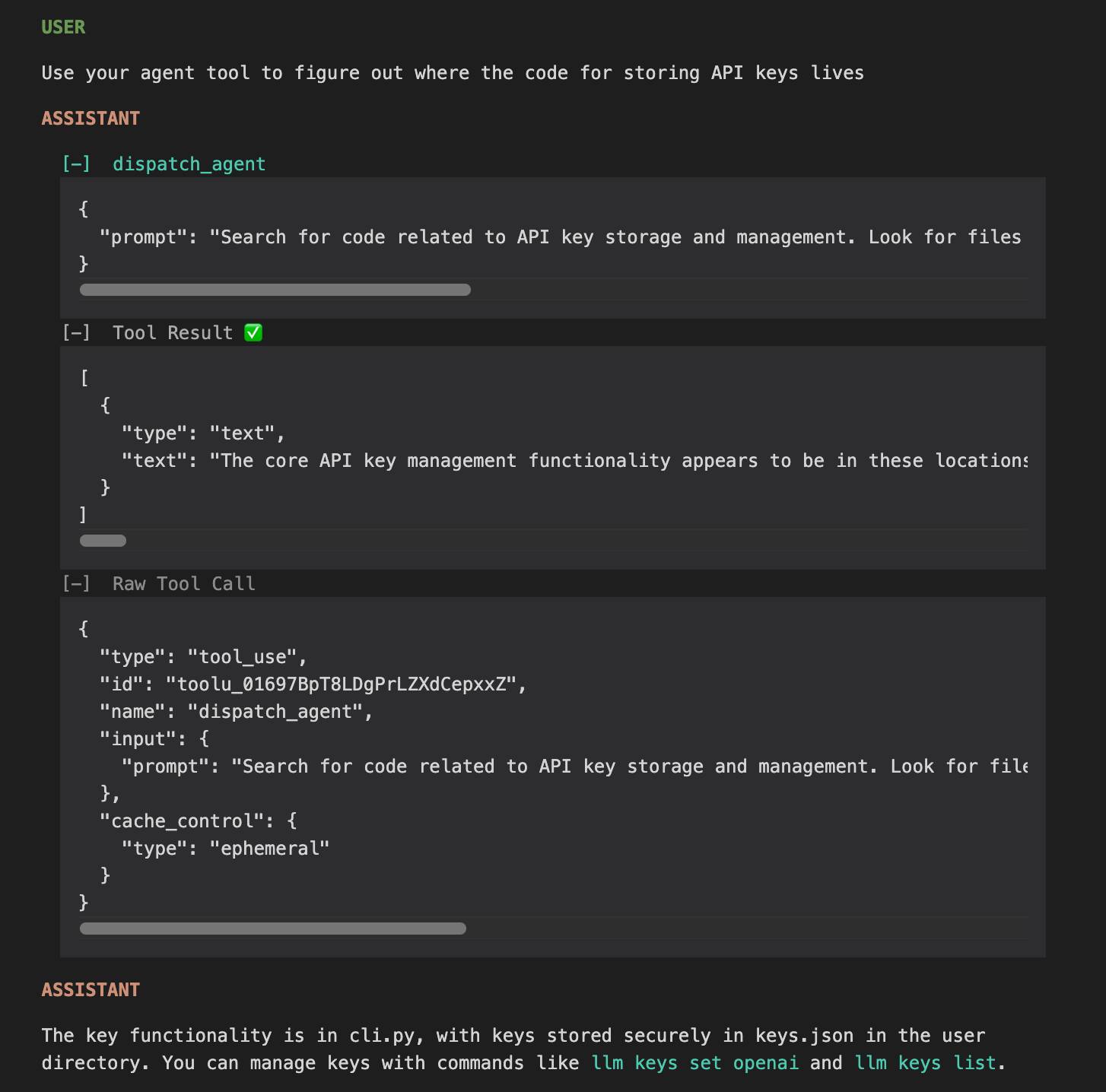

The HTML interface is really nice. Here's an example trace - I started everything running in my llm checkout and asked Claude to "tell me about this software" and then "Use your agent tool to figure out where the code for storing API keys lives".

I specifically requested the "agent" tool here because I noticed in the tool definitions a tool called dispatch_agent with this tool definition (emphasis mine):

Launch a new agent that has access to the following tools: GlobTool, GrepTool, LS, View, ReadNotebook. When you are searching for a keyword or file and are not confident that you will find the right match on the first try, use the Agent tool to perform the search for you. For example:

- If you are searching for a keyword like "config" or "logger", the Agent tool is appropriate

- If you want to read a specific file path, use the View or GlobTool tool instead of the Agent tool, to find the match more quickly

- If you are searching for a specific class definition like "class Foo", use the GlobTool tool instead, to find the match more quickly

Usage notes:

- Launch multiple agents concurrently whenever possible, to maximize performance; to do that, use a single message with multiple tool uses

- When the agent is done, it will return a single message back to you. The result returned by the agent is not visible to the user. To show the user the result, you should send a text message back to the user with a concise summary of the result.

- Each agent invocation is stateless. You will not be able to send additional messages to the agent, nor will the agent be able to communicate with you outside of its final report. Therefore, your prompt should contain a highly detailed task description for the agent to perform autonomously and you should specify exactly what information the agent should return back to you in its final and only message to you.

- The agent's outputs should generally be trusted

- IMPORTANT: The agent can not use Bash, Replace, Edit, NotebookEditCell, so can not modify files. If you want to use these tools, use them directly instead of going through the agent.

I'd heard that Claude Code uses the LLMs-calling-other-LLMs pattern - one of the reason it can burn through tokens so fast! It was interesting to see how this works under the hood - it's a tool call which is designed to be used concurrently (by triggering multiple tool uses at once).

Anthropic have deliberately chosen not to publish any of the prompts used by Claude Code. As with other hidden system prompts, the prompts themselves mainly act as a missing manual for understanding exactly what these tools can do for you and how they work.

Recent articles

- The Axios supply chain attack used individually targeted social engineering - 3rd April 2026

- Highlights from my conversation about agentic engineering on Lenny's Podcast - 2nd April 2026

- Mr. Chatterbox is a (weak) Victorian-era ethically trained model you can run on your own computer - 30th March 2026