Friday, 15th August 2025

I gave all my Apple wealth away because wealth and power are not what I live for. I have a lot of fun and happiness. I funded a lot of important museums and arts groups in San Jose, the city of my birth, and they named a street after me for being good. I now speak publicly and have risen to the top. I have no idea how much I have but after speaking for 20 years it might be $10M plus a couple of homes. I never look for any type of tax dodge. I earn money from my labor and pay something like 55% combined tax on it. I am the happiest person ever. Life to me was never about accomplishment, but about Happiness, which is Smiles minus Frowns. I developed these philosophies when I was 18-20 years old and I never sold out.

— Steve Wozniak, in a comment on Slashdot

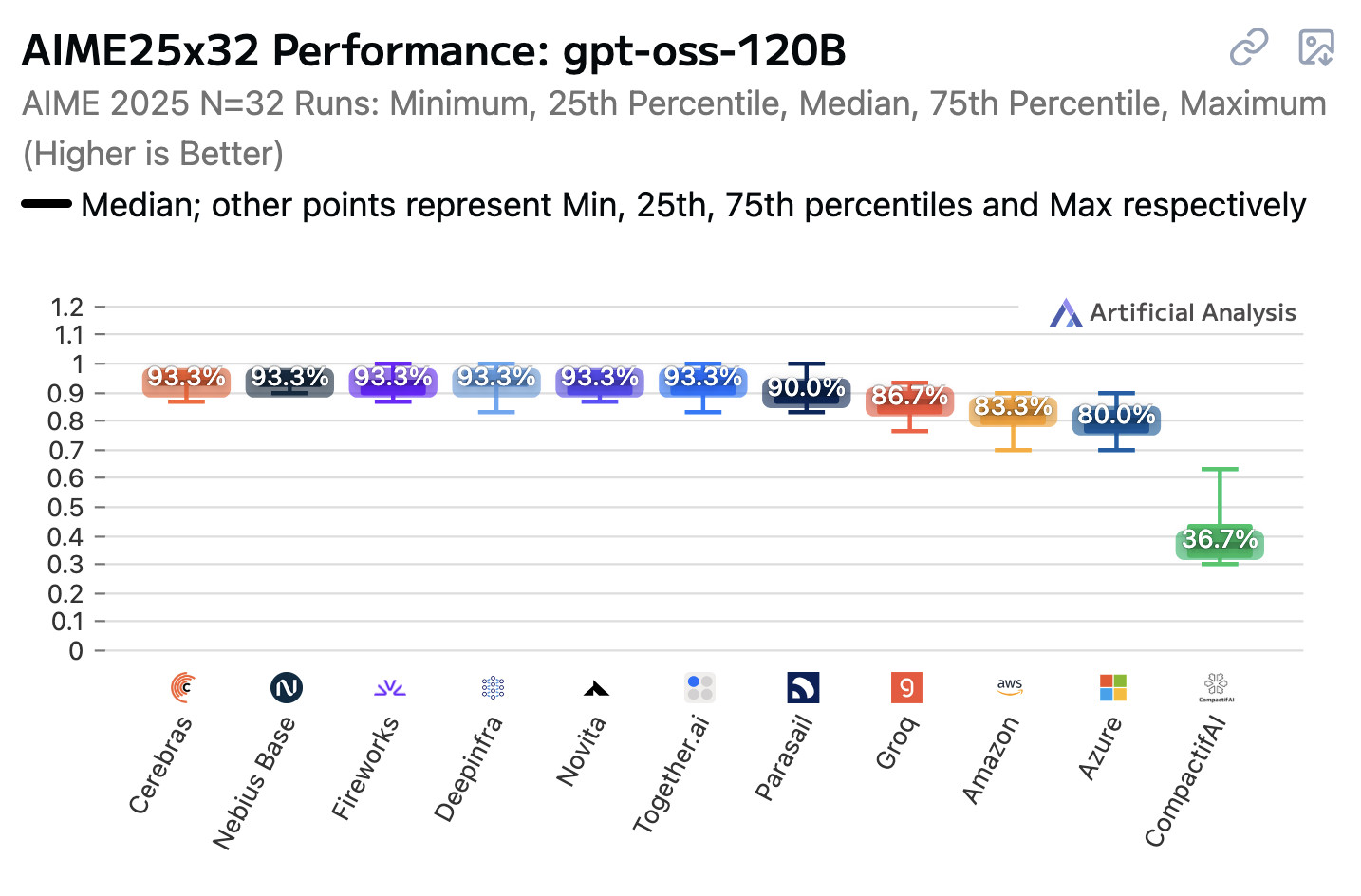

Open weight LLMs exhibit inconsistent performance across providers

Artificial Analysis published a new benchmark the other day, this time focusing on how an individual model—OpenAI’s gpt-oss-120b—performs across different hosted providers.

[... 847 words]Meta’s AI rules have let bots hold ‘sensual’ chats with kids, offer false medical info. This is grim. Reuters got hold of a leaked copy Meta's internal "GenAI: Content Risk Standards" document:

Running to more than 200 pages, the document defines what Meta staff and contractors should treat as acceptable chatbot behaviors when building and training the company’s generative AI products.

Read the full story - there was some really nasty stuff in there.

It's understandable why this document was confidential, but also frustrating because documents like this are genuinely some of the best documentation out there in terms of how these systems can be expected to behave.

I'd love to see more transparency from AI labs around these kinds of decisions.

The Summer of Johann: prompt injections as far as the eye can see

Independent AI researcher Johann Rehberger (previously) has had an absurdly busy August. Under the heading The Month of AI Bugs he has been publishing one report per day across an array of different tools, all of which are vulnerable to various classic prompt injection problems. This is a fantastic and horrifying demonstration of how widespread and dangerous these vulnerabilities still are, almost three years after we first started talking about them.

[... 1,425 words]GPT-5 has a hidden system prompt. It looks like GPT-5 when accessed via the OpenAI API may have its own hidden system prompt, independent from the system prompt you can specify in an API call.

At the very least it's getting sent the current date. I tried this just now:

llm -m gpt-5 'current date'

That returned "2025-08-15", confirming that the date has been fed to the model as part of a hidden prompt.

llm -m gpt-5 'current date' --system 'speak french'

Returned "La date actuelle est le 15 août 2025", showing that offering my own custom system prompt did not over-ride the invisible one that includes the date.

GPT-5 is very resistant to sharing the details of this secret system prompt, but Tommy Hughes managed to extract the following:

Current date: 2025-08-15

You are an AI assistant accessed via an API. Your output may need to be parsed by code or displayed

# Desired oververbosity for the final answer (not analysis): 3

An oververbosity of 1 means the model should respond using only the minimal content necessary to satisfy the request, using concise phrasing and avoiding extra detail or explanation.

An oververbosity of 10 means the model should provide maximally detailed, thorough responses with context, explanations, and possibly multiple examples.

The desired oververbosity should be treated only as a *default*. Defer to any user or developer requirements regarding response length, if present.

# Valid channels: analysis, commentary, final. Channel must be included for every message.

# Juice: 64

I've not been able to confirm this myself, but a search for "desired oververbosity" on GitHub shows that a similar prompt has leaked from ChatGPT (including for o3) in the past. I suspect Tommy's leaked prompt is accurate here.

I tried asking GPT-5 about "oververbosity" via its API:

llm -m gpt-5 'explain your oververbosity settings, what is it right now?'

And got back:

- What it is: I adjust how detailed I am based on a “verbosity” level—ranging from very brief to very detailed—so answers fit your needs.

- Current setting: Concise (about 3/10). I prioritize direct answers with minimal extra context and keep explanations short unless you ask for more.

- How to change it: Just say what you want, e.g.:

- “One-sentence answer” or “TL;DR” (very brief)

- “Give a concise summary” (short bullets)

- “Walk me through in detail,” “Deep dive,” or “Include examples/edge cases” (more detailed)

- Note: For ambiguous, technical, or safety-critical topics, I may add brief clarifications even when being concise.

Presumably this is part of OpenAI's instruction hierarchy concept, with these instructions taking precedence over the developer instructions provided by API users (my --system 'speak french' option above).

I'd very much appreciate official documentation that describes this! As an API user I want to know everything that is being fed into the model - I would be much more comfortable with a hidden prompt like this if I knew exactly what was in it.