Wednesday, 21st May 2025

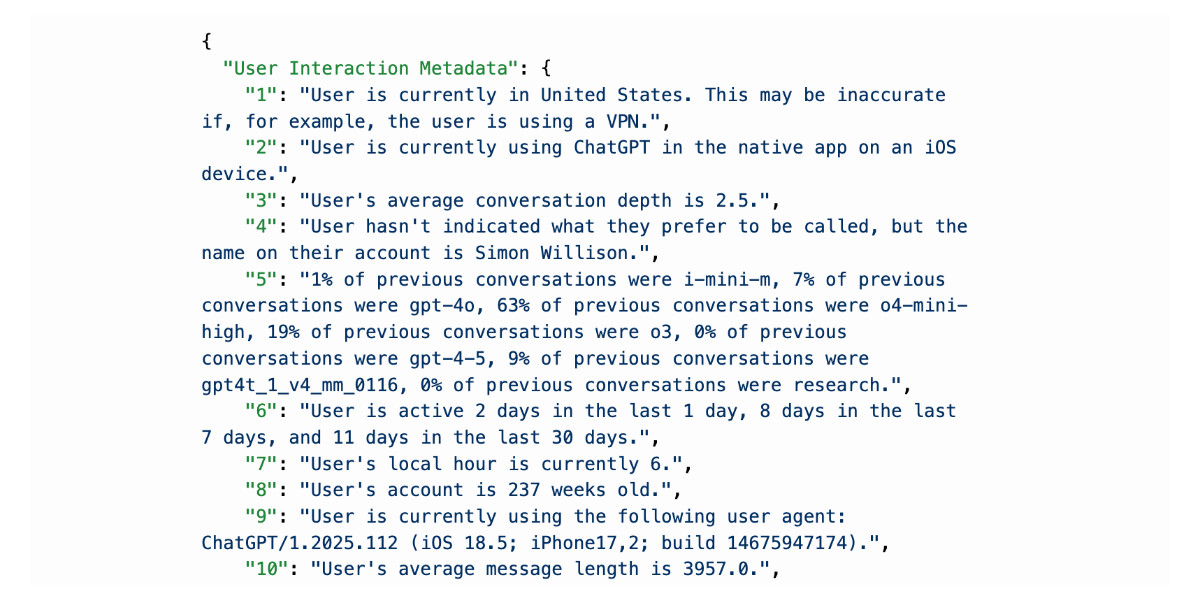

I really don’t like ChatGPT’s new memory dossier

Last month ChatGPT got a major upgrade. As far as I can tell the closest to an official announcement was this tweet from @OpenAI:

[... 2,535 words]Chicago Sun-Times Prints AI-Generated Summer Reading List With Books That Don’t Exist. Classic slop: it listed real authors with entirely fake books.

There's an important follow-up from 404 Media in their subsequent story:

Victor Lim, the vice president of marketing and communications at Chicago Public Media, which owns the Chicago Sun-Times, told 404 Media in a phone call that the Heat Index section was licensed from a company called King Features, which is owned by the magazine giant Hearst. He said that no one at Chicago Public Media reviewed the section and that historically it has not reviewed newspaper inserts that it has bought from King Features.

“Historically, we don’t have editorial review from those mainly because it’s coming from a newspaper publisher, so we falsely made the assumption there would be an editorial process for this,” Lim said. “We are updating our policy to require internal editorial oversight over content like this.”

Gemini Diffusion. Another of the announcements from Google I/O yesterday was Gemini Diffusion, Google's first LLM to use diffusion (similar to image models like Imagen and Stable Diffusion) in place of transformers.

Google describe it like this:

Traditional autoregressive language models generate text one word – or token – at a time. This sequential process can be slow, and limit the quality and coherence of the output.

Diffusion models work differently. Instead of predicting text directly, they learn to generate outputs by refining noise, step-by-step. This means they can iterate on a solution very quickly and error correct during the generation process. This helps them excel at tasks like editing, including in the context of math and code.

The key feature then is speed. I made it through the waitlist and tried it out just now and wow, they are not kidding about it being fast.

In this video I prompt it with "Build a simulated chat app" and it responds at 857 tokens/second, resulting in an interactive HTML+JavaScript page (embedded in the chat tool, Claude Artifacts style) within single digit seconds.

The performance feels similar to the Cerebras Coder tool, which used Cerebras to run Llama3.1-70b at around 2,000 tokens/second.

How good is the model? I've not seen any independent benchmarks yet, but Google's landing page for it promises "the performance of Gemini 2.0 Flash-Lite at 5x the speed" so presumably they think it's comparable to Gemini 2.0 Flash-Lite, one of their least expensive models.

Prior to this the only commercial grade diffusion model I've encountered is Inception Mercury back in February this year.

Update: a correction from synapsomorphy on Hacker News:

Diffusion isn't in place of transformers, it's in place of autoregression. Prior diffusion LLMs like Mercury still use a transformer, but there's no causal masking, so the entire input is processed all at once and the output generation is obviously different. I very strongly suspect this is also using a transformer.

nvtop provided this explanation:

Despite the name, diffusion LMs have little to do with image diffusion and are much closer to BERT and old good masked language modeling. Recall how BERT is trained:

- Take a full sentence ("the cat sat on the mat")

- Replace 15% of tokens with a [MASK] token ("the cat [MASK] on [MASK] mat")

- Make the Transformer predict tokens at masked positions. It does it in parallel, via a single inference step.

Now, diffusion LMs take this idea further. BERT can recover 15% of masked tokens ("noise"), but why stop here. Let's train a model to recover texts with 30%, 50%, 90%, 100% of masked tokens.

Once you've trained that, in order to generate something from scratch, you start by feeding the model all [MASK]s. It will generate you mostly gibberish, but you can take some tokens (let's say, 10%) at random positions and assume that these tokens are generated ("final"). Next, you run another iteration of inference, this time input having 90% of masks and 10% of "final" tokens. Again, you mark 10% of new tokens as final. Continue, and in 10 steps you'll have generated a whole sequence. This is a core idea behind diffusion language models. [...]

Devstral. New Apache 2.0 licensed LLM release from Mistral, this time specifically trained for code.

Devstral achieves a score of 46.8% on SWE-Bench Verified, outperforming prior open-source SoTA models by more than 6% points. When evaluated under the same test scaffold (OpenHands, provided by All Hands AI 🙌), Devstral exceeds far larger models such as Deepseek-V3-0324 (671B) and Qwen3 232B-A22B.

I'm always suspicious of small models like this that claim great benchmarks against much larger rivals, but there's a Devstral model that is just 14GB on Ollama to it's quite easy to try out for yourself.

I fetched it like this:

ollama pull devstral

Then ran it in a llm chat session with llm-ollama like this:

llm install llm-ollama

llm chat -m devstral

Initial impressions: I think this one is pretty good! Here's a full transcript where I had it write Python code to fetch a CSV file from a URL and import it into a SQLite database, creating the table with the necessary columns. Honestly I need to retire that challenge, it's been a while since a model failed at it, but it's still interesting to see how it handles follow-up prompts to demand things like asyncio or a different HTTP client library.

It's also available through Mistral's API. llm-mistral 0.13 configures the devstral-small alias for it:

llm install -U llm-mistral

llm keys set mistral

# paste key here

llm -m devstral-small 'HTML+JS for a large text countdown app from 5m'