1,482 posts tagged “datasette”

Datasette is an open source tool for exploring and publishing data.

2025

Claude can write complete Datasette plugins now

This isn’t necessarily surprising, but it’s worth noting anyway. Claude Sonnet 4.5 is capable of building a full Datasette plugin now.

[... 1,296 words]Two of my public Datasette instances - for my TILs and my blog's backup mirror - were getting hammered with misbehaving bot traffic today. Scaling them up to more Fly instances got them running again but I'd rather not pay extra just so bots can crawl me harder.

The log files showed the main problem was facets: Datasette provides these by default on the table page, but they can be combined in ways that keep poorly written crawlers busy visiting different variants of the same page over and over again.

So I turned those off. I'm now running those instances with --setting allow_facet off (described here), and my logs are full of lines that look like this. The "400 Bad Request" means a bot was blocked from loading the page:

GET /simonwillisonblog/blog_entry?_facet_date=created&_facet=series_id&_facet_size=max&_facet=extra_head_html&_sort=is_draft&created__date=2012-01-30 HTTP/1.1" 400 Bad Request

llm-openrouter 0.5. New release of my LLM plugin for accessing models made available via OpenRouter. The release notes in full:

- Support for tool calling. Thanks, James Sanford. #43

- Support for reasoning options, for example

llm -m openrouter/openai/gpt-5 'prove dogs exist' -o reasoning_effort medium. #45

Tool calling is a really big deal, as it means you can now use the plugin to try out tools (and build agents, if you like) against any of the 179 tool-enabled models on that platform:

llm install llm-openrouter

llm keys set openrouter

# Paste key here

llm models --tools | grep 'OpenRouter:' | wc -l

# Outputs 179

Quite a few of the models hosted on OpenRouter can be accessed for free. Here's a tool-usage example using the llm-tools-datasette plugin against the new Grok 4 Fast model:

llm install llm-tools-datasette

llm -m openrouter/x-ai/grok-4-fast:free -T 'Datasette("https://datasette.io/content")' 'Count available plugins'

Outputs:

There are 154 available plugins.

The output of llm logs -cu shows the tool calls and SQL queries it executed to get that result.

LLM 0.27, the annotated release notes: GPT-5 and improved tool calling

I shipped LLM 0.27 today (followed by a 0.27.1 with minor bug fixes), adding support for the new GPT-5 family of models from OpenAI plus a flurry of improvements to the tool calling features introduced in LLM 0.26. Here are the annotated release notes.

[... 1,174 words]We're hosting the sixth in our series of Datasette Public Office Hours livestream sessions this Friday, 6th of June at 2pm PST (here's that time in your location).

The topic is going to be tool support in LLM, as introduced here.

I'll be walking through the new features, and we're also inviting five minute lightning demos from community members who are doing fun things with the new capabilities. If you'd like to present one of those please get in touch via this form.

Here's a link to add it to Google Calendar.

Saying Bye to Glitch (via) Pirijan, co-creator of Glitch - who stopped working on it six years ago, so has the benefit of distance:

Here lies Glitch, a place on the web you could go to write up a website or a node.js server that would be hosted and updated as you type. 🥀 RIP 2015 – 2025.

Pirijan continues with a poignant retrospective about Glitch's early origins at Fog Greek with the vision of providing "web development with real code that was as easy as editing a Google Doc". Their conclusion:

I still believe there’s a market for easy and fun web development and hosting, but a product like this needs power-users and enthusiasts willing to pay for it. To build any kind of prosumer software, you do have to be an optimist and believe that enough of the world still cares about quality and craft.

Glitch will be shutting down project hosting and user profiles on July 8th.

Code will be available to download until the end of the year. Glitch have an official Python export script that can download all of your projects and assets.

Jenn Schiffer, formerly Director of Community at Glitch and then Fastly, is a little more salty:

all that being said, i do sincerely want to thank fastly for giving glitch the opportunity to live to its 3-year acqui-versary this week. they generously took in a beautiful flower and placed it upon their sunny window sill with hopes to grow it more. the problem is they chose to never water it, and anyone with an elementary school education know what happens then. i wish us all a merry august earnings call season.

I'm very sad to see Glitch go. I've been pointing people to my tutorial on Running Datasette on Glitch for 5 years now, it was a fantastic way to help people quickly get started hosting their own projects.

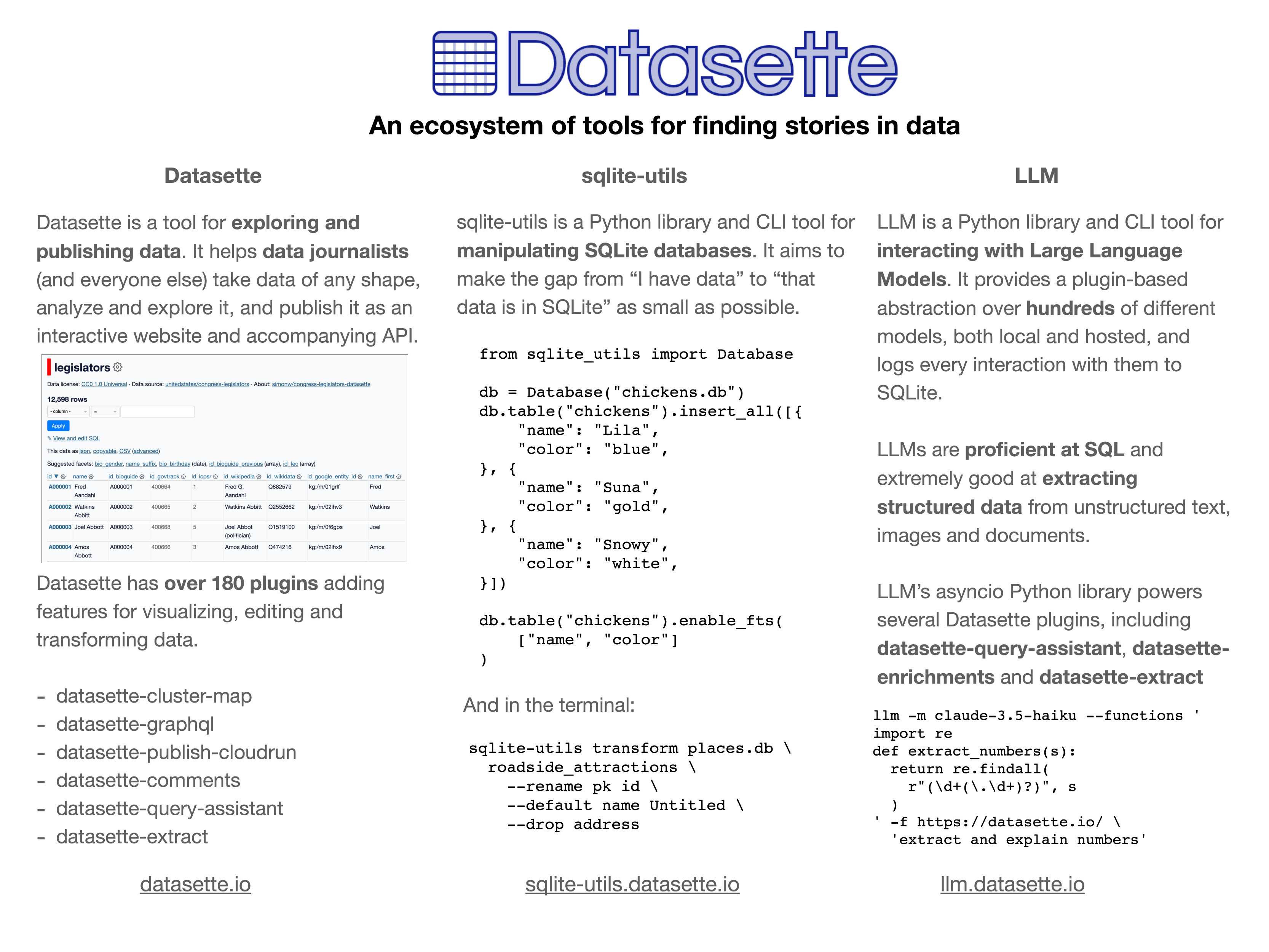

In addition to my workshop the other day I'm also participating in the poster session at PyCon US this year.

This means that tomorrow (Sunday 18th May) I'll be hanging out next to my poster from 10am to 1pm in Hall A talking to people about my various projects.

I'll confess: I didn't pay close enough attention to the poster information, so when I first put my poster up it looked a little small:

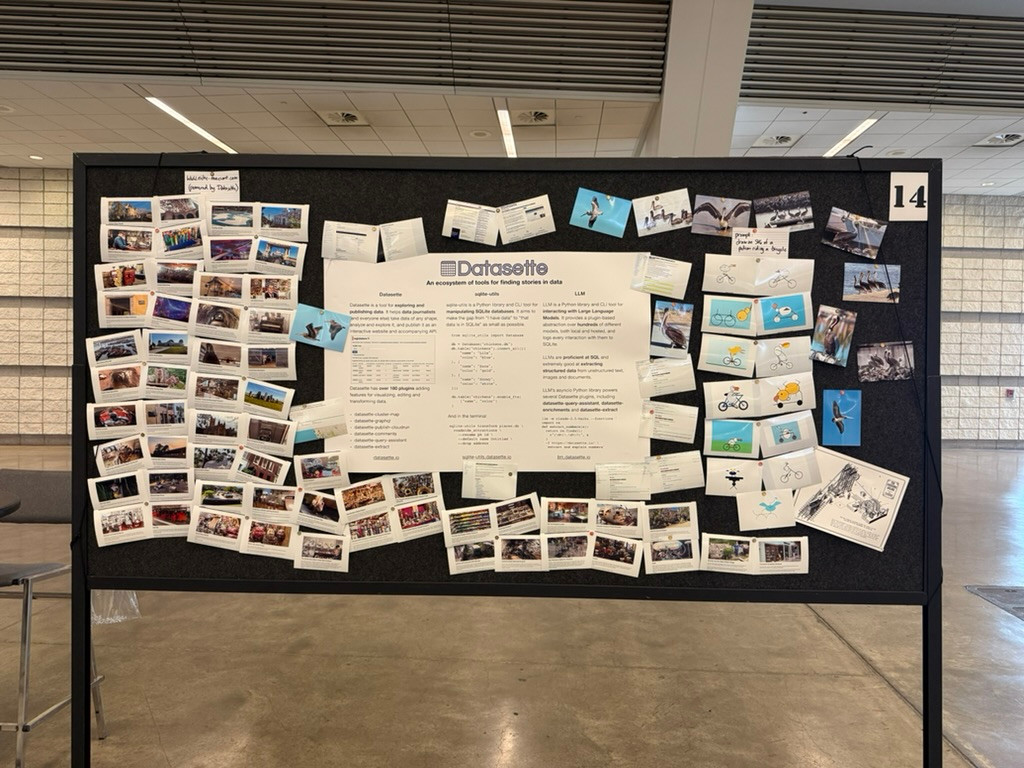

... so I headed to the nearest CVS and printed out some photos to better represent my interests and personality. I'm going for a "teenage bedroom" aesthetic here, I'm very happy with the result:

Here's the poster in the middle (also available as a PDF). It has columns for Datasette, sqlite-utils and LLM.

If you're at PyCon I'd love to talk to you about things I'm working on!

Update: Thanks to everyone who came along. Here's a 6MB photo of the poster setup. The museums were all from my www.niche-museums.com site and the pelicans riding a bicycle SVGs came from my pelican-riding-a-bicycle tag.

django-simple-deploy. Eric Matthes presented a lightning talk about this project at PyCon US this morning. "Django has a deploy command now". You can run it like this:

pip install django-simple-deploy[fly_io]

# Add django_simple_deploy to INSTALLED_APPS.

python manage.py deploy --automate-all

It's plugin-based (inspired by Datasette!) and the project has stable plugins for three hosting platforms: dsd-flyio, dsd-heroku and dsd-platformsh.

Currently in development: dsd-vps - a plugin that should work with any VPS provider, using Paramiko to connect to a newly created instance and run all of the commands needed to start serving a Django application.

Introducing Datasette for Newsrooms. We're introducing a new product suite today called Datasette for Newsrooms - a bundled collection of Datasette Cloud features built specifically for investigative journalists and data teams. We're describing it as an all-in-one data store, search engine, and collaboration platform designed to make working with data in a newsroom easier, faster, and more transparent.

If your newsroom could benefit from a managed version of Datasette we would love to hear from you. We're offering it to nonprofit newsrooms for free for the first year (they can pay us in feedback), and we have a two month trial for everyone else.

Get in touch at hello@datasette.cloud if you'd like to try it out.

One crucial detail: we will help you get started - we'll load data into your instance for you (you get some free data engineering!) and walk you through how to use it, and we will eagerly consume any feedback you have for us and prioritize shipping anything that helps you use the tool. Our unofficial goal: we want someone to win a Pulitzer for investigative reporting where our tool played a tiny part in their reporting process.

Here's an animated GIF demo (taken from our new Newsrooms landing page) of my favorite recent feature: the ability to extract structured data into a table starting with an unstructured PDF, using the latest version of the datasette-extract plugin.

The single most impactful investment I’ve seen AI teams make isn’t a fancy evaluation dashboard—it’s building a customized interface that lets anyone examine what their AI is actually doing. I emphasize customized because every domain has unique needs that off-the-shelf tools rarely address. When reviewing apartment leasing conversations, you need to see the full chat history and scheduling context. For real-estate queries, you need the property details and source documents right there. Even small UX decisions—like where to place metadata or which filters to expose—can make the difference between a tool people actually use and one they avoid. [...]

Teams with thoughtfully designed data viewers iterate 10x faster than those without them. And here’s the thing: These tools can be built in hours using AI-assisted development (like Cursor or Loveable). The investment is minimal compared to the returns.

— Hamel Husain, A Field Guide to Rapidly Improving AI Products