November 2023

85 posts: 8 entries, 30 links, 15 quotes, 32 beats

Nov. 22, 2023

Nov. 23, 2023

YouTube: Intro to Large Language Models. Andrej Karpathy is an outstanding educator, and this one-hour video offers an excellent technical introduction to LLMs.

At 42m Andrej expands on his idea of LLMs as the center of a new style of operating system, tying together tools and a filesystem and multimodal I/O.

There’s a comprehensive section on LLM security—jailbreaking, prompt injection, data poisoning—at the 45m mark.

I also appreciated his note on how parameter size maps to file size: Llama 70B is 140GB, because each of those 70 billion parameters is a 2-byte, 16-bit floating point number on disk.
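That arithmetic is easy to check. Here's a minimal sketch, assuming decimal gigabytes (10^9 bytes) and ignoring any file format overhead; the function name `model_size_gb` is my own, not something from the video:

```python
def model_size_gb(parameters: int, bytes_per_parameter: int = 2) -> float:
    """Estimate on-disk size in decimal gigabytes (1 GB = 10**9 bytes).

    Assumes every parameter is stored at the same width and that the
    file contains nothing but the weights.
    """
    return parameters * bytes_per_parameter / 1e9


# 70 billion parameters at 2 bytes (16 bits) each
print(model_size_gb(70_000_000_000))  # 140.0
```

The same function gives a rough feel for other storage widths: passing `bytes_per_parameter=1` halves the estimate.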

The 6 Types of Conversations with Generative AI. I’ve been hoping to see more user research on how users interact with LLMs for a while. Here’s a study from Nielsen Norman Group, who conducted a 2-week diary study involving 18 participants, then interviewed 14 of them.

They identified six categories of conversation, and made some resulting design recommendations.

A key observation is that “search style” queries (just a few keywords) often indicate users who are new to LLMs, and should be identified as a sign that the user needs more inline education on how to best harness the tool.

Suggested follow-up prompts are valuable for most of the types of conversation identified.

To some degree, the whole point of the tech industry’s embrace of “ethics” and “safety” is about reassurance. Companies realize that the technologies they are selling can be disconcerting and disruptive; they want to reassure the public that they’re doing their best to protect consumers and society. At the end of the day, though, we now know there’s no reason to believe that those efforts will ever make a difference if the company’s “ethics” end up conflicting with its money. And when have those two things ever not conflicted?

Nov. 24, 2023

Nov. 25, 2023

I’m on the Newsroom Robots podcast, with thoughts on the OpenAI board

Newsroom Robots is a weekly podcast exploring the intersection of AI and journalism, hosted by Nikita Roy.

[... 1,032 words]

Nov. 26, 2023

This is nonsensical. There is no way to understand the LLaMA models themselves as a recasting or adaptation of any of the plaintiffs’ books.

Nov. 27, 2023

Prompt injection explained, November 2023 edition

A neat thing about podcast appearances is that, thanks to Whisper transcriptions, I can often repurpose parts of them as written content for my blog.

[... 1,357 words]

MonadGPT (via) “What would have happened if ChatGPT was invented in the 17th century? MonadGPT is a possible answer.

MonadGPT is a finetune of Mistral-Hermes 2 on 11,000 early modern texts in English, French and Latin, mostly coming from EEBO and Gallica.

Like the original Mistral-Hermes, MonadGPT can be used in conversation mode. It will not only answer in an historical language and style but will use historical and dated references.”

Nov. 28, 2023

Nov. 29, 2023

Announcing Deno Cron. Scheduling tasks in deployed applications is surprisingly difficult. Deno clearly understand this, and they’ve added a new Deno.cron(name, cron_definition, callback) mechanism for running a JavaScript function every X minutes/hours/etc.

As with several other recent Deno features, there are two versions of the implementation. The first is an in-memory implementation in the Deno open source binary, while the second is a much more robust closed-source implementation that runs in Deno Deploy:

“When a new production deployment of your project is created, an ephemeral V8 isolate is used to evaluate your project’s top-level scope and to discover any Deno.cron definitions. A global cron scheduler is then updated with your project’s latest cron definitions, which includes updates to your existing crons, new crons, and deleted crons.”

Two interesting features: unlike regular cron, the Deno version prevents cron tasks that take too long from ever overlapping each other, and a backoffSchedule: [1000, 5000, 10000] option can be used to schedule attempts to re-run functions if they raise an exception.
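To illustrate the retry idea, here's a rough Python sketch of how a backoff schedule like that could behave. This is not Deno's implementation: `run_with_backoff` is a hypothetical helper, and I'm assuming the values are delays in milliseconds to wait before each successive retry.

```python
import time
from typing import Callable, Sequence


def run_with_backoff(
    task: Callable[[], object],
    backoff_schedule_ms: Sequence[int] = (1000, 5000, 10000),
    sleep: Callable[[float], None] = time.sleep,
) -> object:
    """Run task once; if it raises, retry after each delay in the schedule.

    Assumption: each entry is a wait in milliseconds before the next
    attempt. When the schedule is exhausted the last error is re-raised.
    """
    try:
        return task()
    except Exception as error:
        last_error = error

    for delay_ms in backoff_schedule_ms:
        sleep(delay_ms / 1000)  # time.sleep expects seconds
        try:
            return task()
        except Exception as error:
            last_error = error

    raise last_error
```

The `sleep` parameter is injectable so the behavior can be exercised without actually waiting, for example by passing `delays.append` to record the waits instead.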

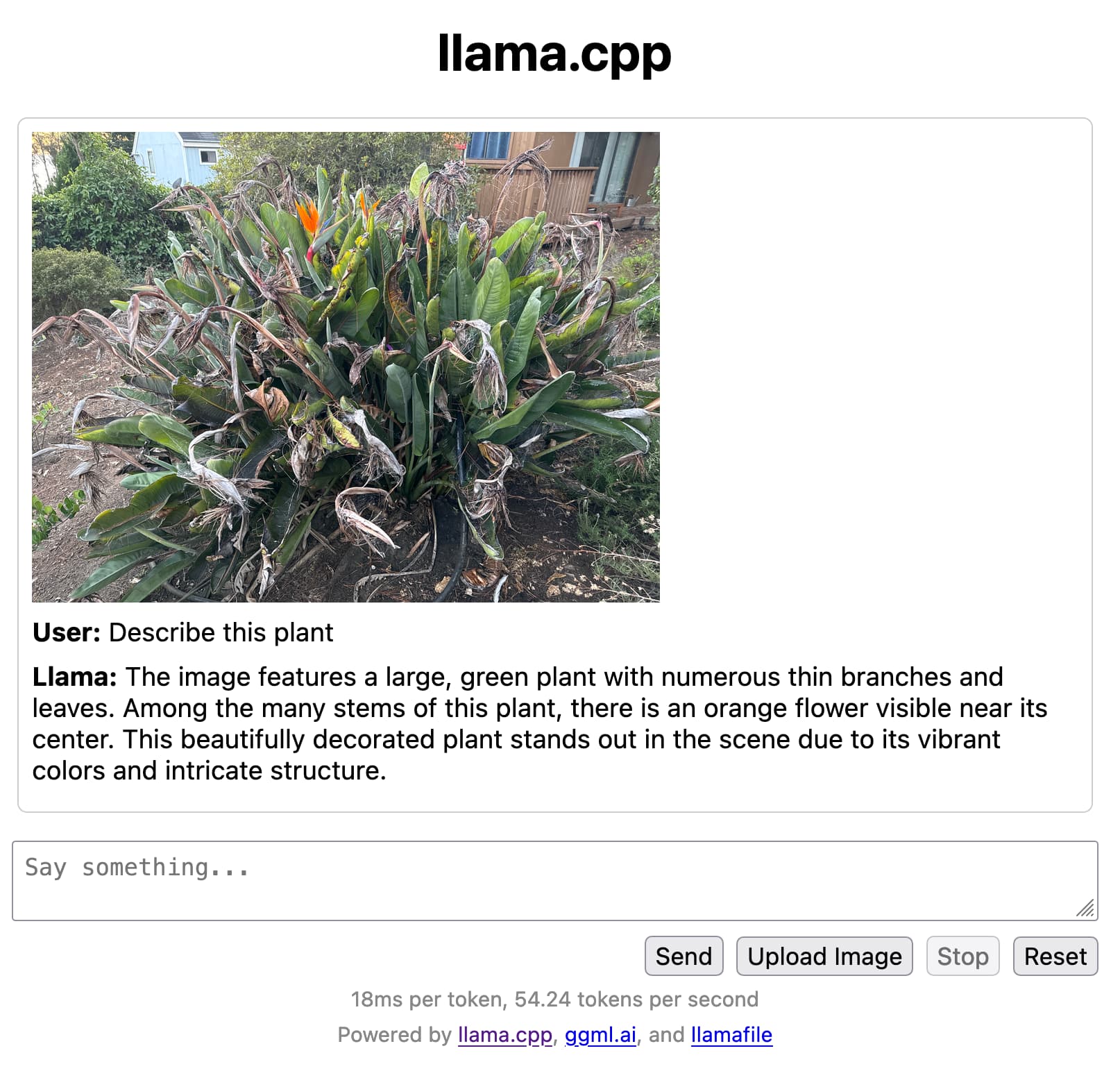

llamafile is the new best way to run an LLM on your own computer

Mozilla’s innovation group and Justine Tunney just released llamafile, and I think it’s now the single best way to get started running Large Language Models (think your own local copy of ChatGPT) on your own computer.

[... 650 words]

Nov. 30, 2023

ChatGPT is one year old. Here’s how it changed the world. I’m quoted in this piece by Benj Edwards about ChatGPT’s first birthday:

“Imagine if every human being could automate the tedious, repetitive information tasks in their lives, without needing to first get a computer science degree,” AI researcher Simon Willison told Ars in an interview about ChatGPT’s impact. “I’m seeing glimpses that LLMs might help make a huge step in that direction.”

This is what I constantly tell my students: The hard part about doing a tech product for the most part isn't the what beginners think makes tech hard — the hard part is wrangling systemic complexity in a good, sustainable and reliable way.

Many non-tech people e.g. look at programmers and think the hard part is knowing what this garble of weird text means. But this is the easy part. And if you are a person who would think it is hard, you probably don't know about all the demons out there that will come to haunt you if you don't build a foundation that helps you actively keeping them away.

— atoav

Annotate and explore your data with datasette-comments. New plugin for Datasette and Datasette Cloud: datasette-comments, providing tools for collaborating on data exploration with a team through posting comments on individual rows of data.

Alex Garcia built this for Datasette Cloud but as with almost all of our work there it’s also available as an open source Python package.