Wednesday, 23rd October 2024

OpenAI’s monthly revenue hit $300 million in August, up 1,700 percent since the beginning of 2023, and the company expects about $3.7 billion in annual sales this year, according to financial documents reviewed by The New York Times. [...]

The company expects ChatGPT to bring in $2.7 billion in revenue this year, up from $700 million in 2023, with $1 billion coming from other businesses using its technology.

— Mike Isaac and Erin Griffith, New York Times, Sep 27th 2024

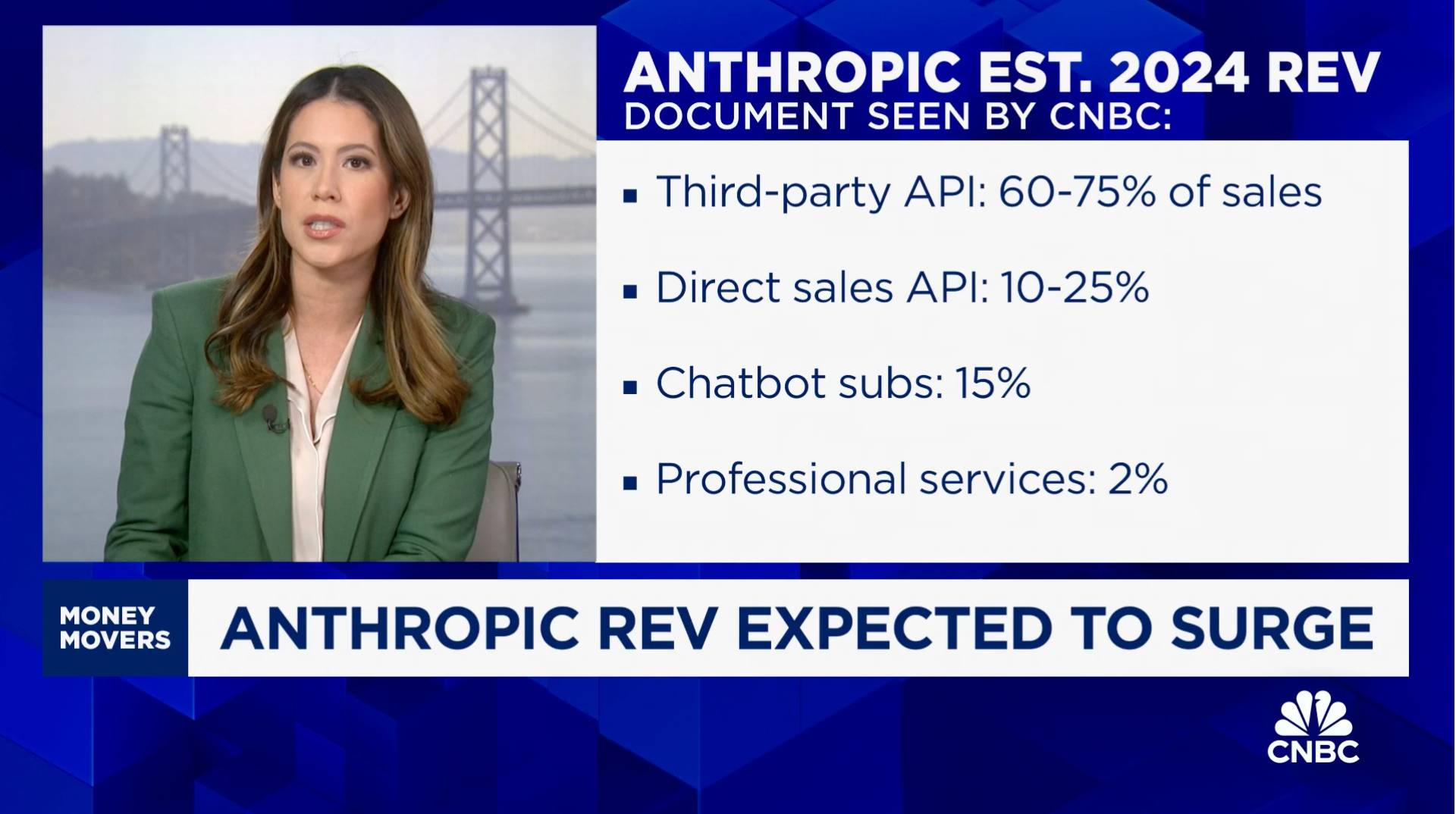

According to a document that I viewed, Anthropic is telling investors that it is expecting a billion dollars in revenue this year.

Third-party API is expected to make up the majority of sales, 60% to 75% of the total. That refers to the interfaces that allow external developers or third parties like Amazon's AWS to build and scale their own AI applications using Anthropic's models. [Simon's guess: this could mean Anthropic model access sold through AWS Bedrock and Google Vertex]

That is by far its biggest business, with direct API sales a distant second projected to bring in 10% to 25% of revenue. Chatbots, that is its subscription revenue from Claude, the chatbot, that's expected to make up 15% of sales in 2024 at $150 million.

— Deirdre Bosa, CNBC Money Movers, Sep 24th 2024

Claude Artifact Runner (via) One of my least favourite things about Claude Artifacts (notes on how I use those here) is the way it defaults to writing code in React in a way that's difficult to reuse outside of Artifacts. I start most of my prompts with "no react" so that it will kick out regular HTML and JavaScript instead, which I can then copy out into my tools.simonwillison.net GitHub Pages repository.

It looks like Cláudio Silva has solved that problem. His claude-artifact-runner repo provides a skeleton of a React app that reflects the Artifacts environment - including bundling libraries such as Shadcn UI, Tailwind CSS, Lucide icons and Recharts that are included in that environment by default.

This means you can clone the repo, run npm install && npm run dev to start a development server, then copy and paste Artifacts directly from Claude into the src/artifact-component.tsx file and have them rendered instantly.

I tried it just now and it worked perfectly. I prompted:

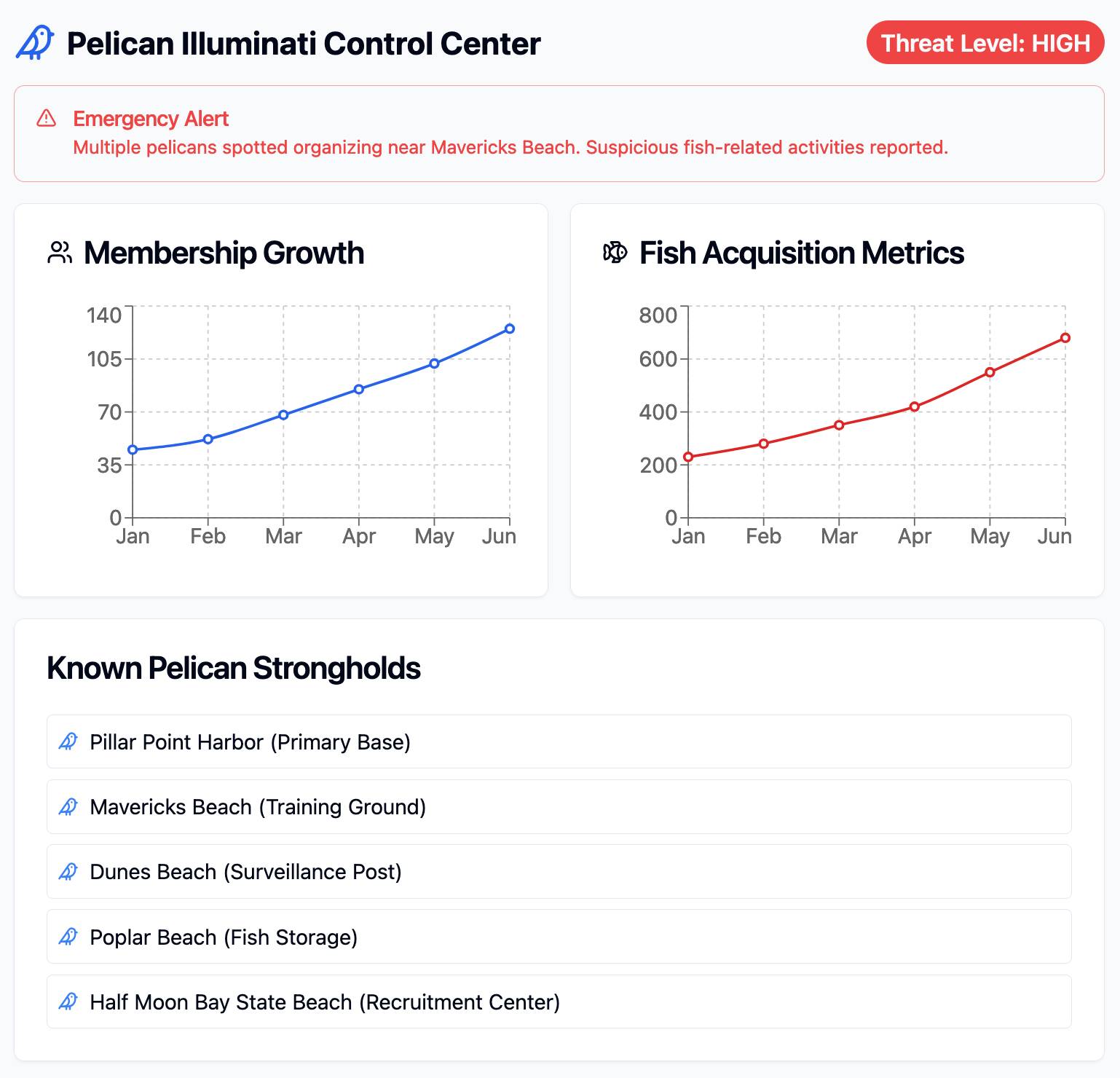

Build me a cool artifact using Shadcn UI and Recharts around the theme of a Pelican secret society trying to take over Half Moon Bay

Then copied and pasted the resulting code into that file and it rendered the exact same thing that Claude had shown me in its own environment.

I tried running npm run build to create a built version of the application but I got some frustrating TypeScript errors - and I didn't want to make any edits to the code to fix them.

After poking around with the help of Claude I found this command which correctly built the application for me:

npx vite build

This created a dist/ directory containing an index.html file and assets/index-CSlCNAVi.css (46.22KB) and assets/index-f2XuS8JF.js (542.15KB) files - a bit heavy for my liking but they did correctly run the application when hosted through a python -m http.server localhost server.

We enhanced the ability of the upgraded Claude 3.5 Sonnet and Claude 3.5 Haiku to recognize and resist prompt injection attempts. Prompt injection is an attack where a malicious user feeds instructions to a model that attempt to change its originally intended behavior. Both models are now better able to recognize adversarial prompts from a user and behave in alignment with the system prompt. We constructed internal test sets of prompt injection attacks and specifically trained on adversarial interactions.

With computer use, we recommend taking additional precautions against the risk of prompt injection, such as using a dedicated virtual machine, limiting access to sensitive data, restricting internet access to required domains, and keeping a human in the loop for sensitive tasks.

Using Rust in non-Rust servers to improve performance (via) Deep dive into different strategies for optimizing part of a web server application - in this case written in Node.js, but the same strategies should work for Python as well - by integrating with Rust in different ways.

The example app renders QR codes, initially using the pure JavaScript qrcode package. That ran at 1,464 req/sec, but switching it to calling a tiny Rust CLI wrapper around the qrcode crate using Node.js spawn() increased that to 2,572 req/sec.

This is yet another reminder to me that I need to get over my cgi-bin era bias that says that shelling out to another process during a web request is a bad idea. It turns out modern computers can quite happily spawn and terminate 2,500+ processes a second!

The article optimizes further first through a Rust library compiled to WebAssembly (2,978 req/sec) and then through a Rust function exposed to Node.js as a native library (5,490 req/sec), then finishes with a full Rust rewrite of the server that replaces Node.js entirely, running at 7,212 req/sec.

Full source code to accompany the article is available in the using-rust-in-non-rust-servers repository.

Running prompts against images and PDFs with Google Gemini.

New TIL. I've been experimenting with the Google Gemini APIs for running prompts against images and PDFs (in preparation for finally adding multi-modal support to LLM) - here are my notes on how to send images or PDF files to their API using curl and the base64 -i macOS command.

I figured out the curl incantation first and then got Claude to build me a Bash script that I can execute like this:

prompt-gemini 'extract text' example-handwriting.jpg

Playing with this is really fun. The Gemini models charge less than 1/10th of a cent per image, so it's really inexpensive to try them out.

Go to data.gov, find an interesting recent dataset, and download it. Install sklearn with bash tool write a .py file to split the data into train and test and make a classifier for it. (you may need to inspect the data and/or iterate if this goes poorly at first, but don't get discouraged!). Come up with some way to visualize the results of your classifier in the browser.

— Alex Albert, Prompting Claude Computer Use