5 posts tagged “webrtc”

2026

WebRTC is designed to degrade and drop my prompt during poor network conditions.

wtf my dude

WebRTC aggressively drops audio packets to keep latency low. If you’ve ever heard distorted audio on a conference call, that’s WebRTC baybee. The idea is that conference calls depend on rapid back-and-forth, so pausing to wait for audio is unacceptable.

…but as a user, I would much rather wait an extra 200ms for my slow/expensive prompt to be accurate. After all, I’m paying good money to boil the ocean, and a garbage prompt means a garbage response. It’s not like LLMs are particularly responsive anyway.

But I’m not allowed to wait. It’s impossible to even retransmit a WebRTC audio packet within a browser; we tried at Discord. The implementation is hard-coded for real-time latency or else.

— Luke Curley, OpenAI’s WebRTC Problem, in response to How OpenAI delivers low-latency voice AI at scale

2025

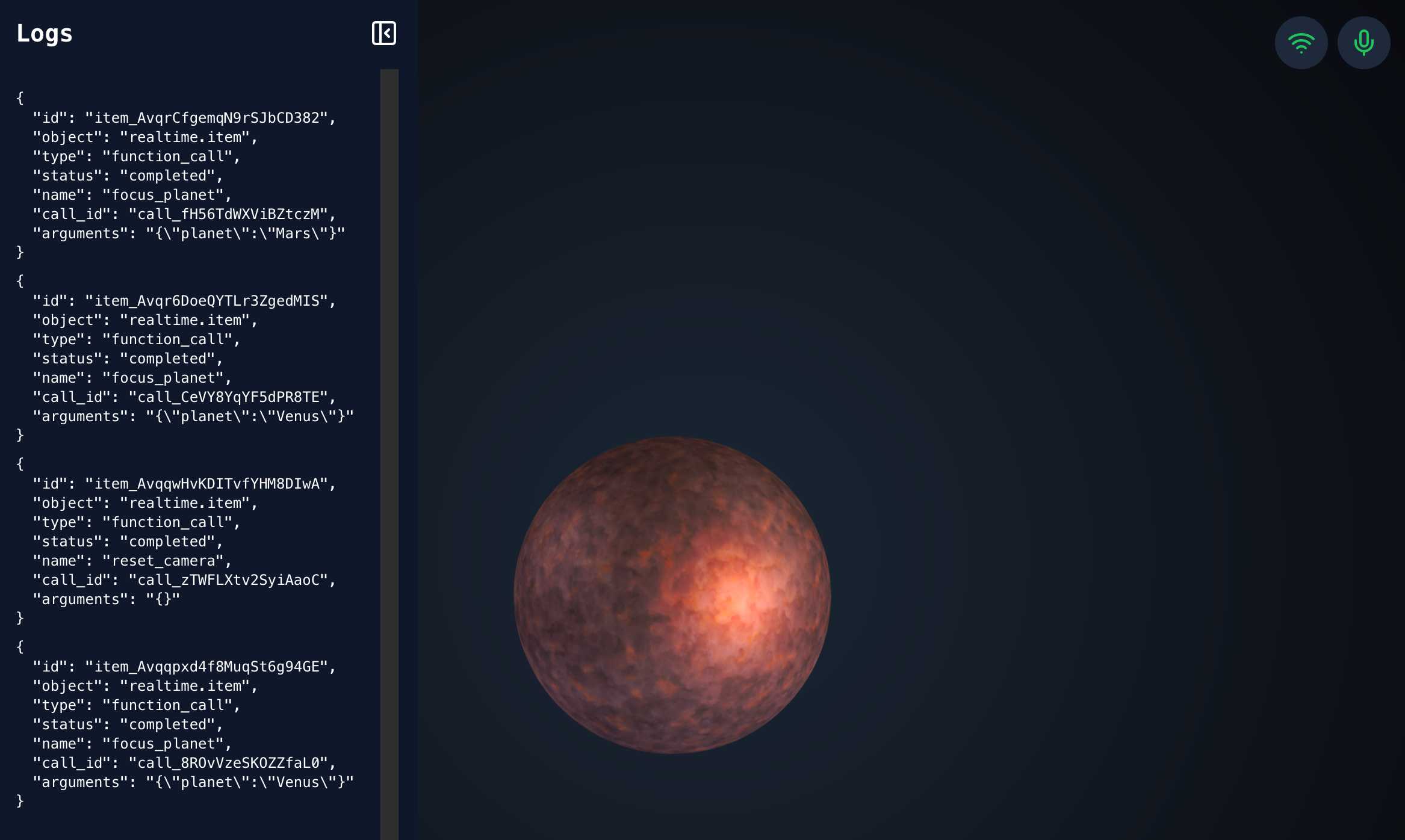

openai-realtime-solar-system. This was my favourite demo from OpenAI DevDay back in October - a voice-driven exploration of the solar system, developed by Katia Gil Guzman, where you could say things out loud like "show me Mars" and it would zoom around showing you different planetary bodies.

OpenAI finally released the code for it, now upgraded to use the new, easier to use WebRTC API they released in December.

I ran it like this, loading my OpenAI API key using llm keys get:

cd /tmp

git clone https://github.com/openai/openai-realtime-solar-system

cd openai-realtime-solar-system

npm install

OPENAI_API_KEY="$(llm keys get openai)" npm run dev

You need to click on both the Wifi icon and the microphone icon before you can instruct it with your voice. Try "Show me Mars".

2024

OpenAI WebRTC Audio demo. OpenAI announced a bunch of API features today, including a brand new WebRTC API for setting up a two-way audio conversation with their models.

They tweeted this opaque code example:

async function createRealtimeSession(inStream, outEl, token) { const pc = new RTCPeerConnection(); pc.ontrack = e => outEl.srcObject = e.streams[0]; pc.addTrack(inStream.getTracks()[0]); const offer = await pc.createOffer(); await pc.setLocalDescription(offer); const headers = { Authorization:Bearer ${token}, 'Content-Type': 'application/sdp' }; const opts = { method: 'POST', body: offer.sdp, headers }; const resp = await fetch('https://api.openai.com/v1/realtime', opts); await pc.setRemoteDescription({ type: 'answer', sdp: await resp.text() }); return pc; }

So I pasted that into Claude and had it build me this interactive demo for trying out the new API.

My demo uses an OpenAI key directly, but the most interesting aspect of the new WebRTC mechanism is its support for ephemeral tokens.

This solves a major problem with their previous realtime API: in order to connect to their endpoint you need to provide an API key, but that meant making that key visible to anyone who uses your application. The only secure way to handle this was to roll a full server-side proxy for their WebSocket API, just so you could hide your API key in your own server. cloudflare/openai-workers-relay is an example implementation of that pattern.

Ephemeral tokens solve that by letting you make a server-side call to request an ephemeral token which will only allow a connection to be initiated to their WebRTC endpoint for the next 60 seconds. The user's browser then starts the connection, which will last for up to 30 minutes.

2019

How Zoom’s web client avoids using WebRTC (via) It turns out video conferencing app Zoom uses their own WebAssembly compiled video and audio codecs and transmits H264 over WebSockets.