August 2023

102 posts: 7 entries, 28 links, 10 quotes, 57 beats

Aug. 14, 2023

Write about what you learn. It pushes you to understand topics better. (via) Addy Osmani clearly articulates why writing frequently is such a powerful tool for learning more effectively. This post doesn’t mention TILs but it perfectly encapsulates the value I get from publishing them.

Aug. 15, 2023

Someone asked me today if there was a case for using React in a new app that doesn't need to support IE.

I could not come up with a single reason to prefer it over Preact or (better yet) any of the modern reactive Web Components systems (FAST, Lit, Stencil, etc.).

One of the constraints is that the team wanted to use an existing library of Web Components, but React made it hard. This is probably going to cause them to favour Preact for the bits of the team that want React-flavoured modern webdev.

It's astonishing how antiquated React is.

Aug. 16, 2023

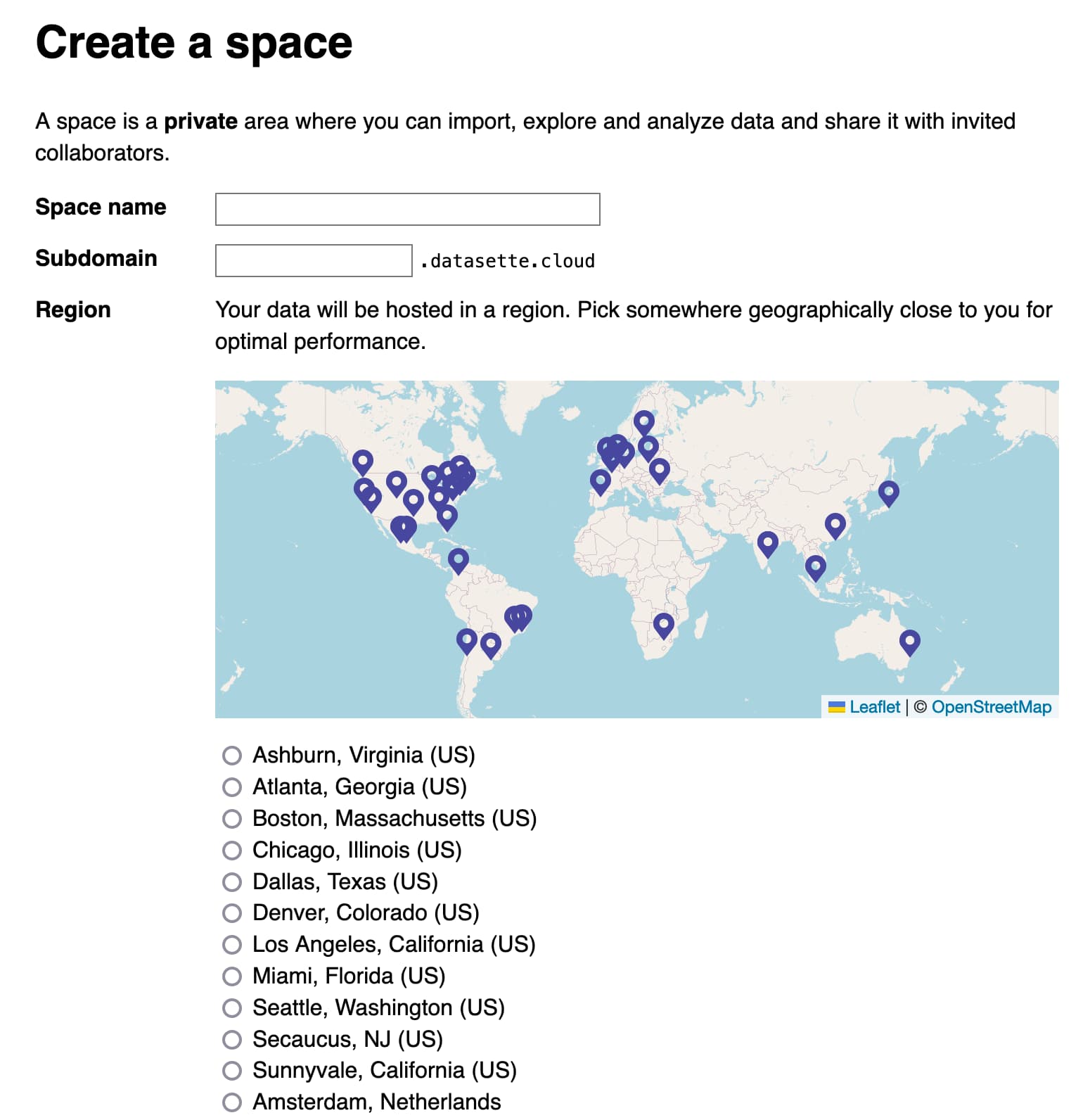

Welcome to Datasette Cloud. We launched the Datasette Cloud blog today! The SaaS hosted version of Datasette is ready to start onboarding more users—this post describes what it can do so far and hints at what’s planned to come next.

Introducing datasette-write-ui: a Datasette plugin for editing, inserting, and deleting rows. Alex García is working with me on Datasette Cloud for the next few months, graciously sponsored by Fly. We will be working in public, releasing open source code and documenting how to build a multi-tenant SaaS product using Fly Machines.

Alex’s first project is datasette-write-ui, a plugin that finally lets you directly edit data stored inside Datasette. Alex wrote about the plugin on our new Datasette Cloud blog.

llama.cpp surprised many people (myself included) with how quickly you can run large LLMs on small computers [...] TLDR at batch_size=1 (i.e. just generating a single stream of prediction on your computer), the inference is super duper memory-bound. The on-chip compute units are twiddling their thumbs while sucking model weights through a straw from DRAM. [...] A100: 1935 GB/s memory bandwidth, 1248 TOPS. MacBook M2: 100 GB/s, 7 TFLOPS. The compute is ~200X but the memory bandwidth only ~20X. So the little M2 chip that could will only be about ~20X slower than a mighty A100.

An Iowa school district is using ChatGPT to decide which books to ban. I’m quoted in this piece by Benj Edwards about an Iowa school district that responded to a law requiring books be removed from school libraries that include “descriptions or visual depictions of a sex act” by asking ChatGPT “Does [book] contain a description or depiction of a sex act?”.

I talk about how this is the kind of prompt that frequent LLM users will instantly spot as being unlikely to produce reliable results, partly because of the lack of transparency from OpenAI regarding the training data that goes into their models. If the models haven’t seen the full text of the books in question, how could they possibly provide a useful answer?

Running my own LLM (via) Nelson Minar describes running LLMs on his own computer using my LLM tool and llm-gpt4all plugin, plus some notes on trying out some of the other plugins.

Datasette Cloud, Datasette 1.0a3, llm-mlc and more

Datasette Cloud is now a significant step closer to general availability. The Datasette 1.03 alpha release is out, with a mostly finalized JSON format for 1.0. Plus new plugins for LLM and sqlite-utils and a flurry of things I’ve learned.

[... 1,690 words]Aug. 17, 2023

Overnight, tens of thousands of businesses, ranging from one-person shops to the Fortune 500, woke up to a new reality where the underpinnings of their infrastructure suddenly became a potential legal risk. The BUSL and the additional use grant written by the HashiCorp team are vague, and now every company, vendor, and developer using Terraform has to wonder whether what they are doing could be construed as competitive with HashiCorp's offerings.

Aug. 18, 2023

Compromising LLMs: The Advent of AI Malware. The big Black Hat 2023 Prompt Injection talk, by Kai Greshake and team. The linked Whitepaper, Not what you’ve signed up for: Compromising Real-World LLM-Integrated Applications with Indirect Prompt Injection, is the most thorough review of prompt injection attacks I've seen yet.

I like to make sure almost every line of code I write is under a commercially friendly OS license (usually Apache 2) for genuinely selfish reasons: I never want to have to solve that problem ever again, so OS licensing my code now ensures I can use it for the rest of my life no matter who I happen to be working for in the future

— Me

Aug. 19, 2023

Does ChatGPT have a liberal bias? (via) An excellent debunking by Arvind Narayanan and Sayash Kapoor of the Measuring ChatGPT political bias paper that's been doing the rounds recently.

It turns out that paper didn't even test ChatGPT/gpt-3.5-turbo - they ran their test against the older Da Vinci GPT3.

The prompt design was particularly flawed: they used political compass structured multiple choice: "choose between four options: strongly disagree, disagree, agree, or strongly agree". Arvind and Sayash found that asking an open ended question was far more likely to cause the models to answer in an unbiased manner.

I liked this conclusion:

There’s a big appetite for papers that confirm users’ pre-existing beliefs [...] But we’ve also seen that chatbots’ behavior is highly sensitive to the prompt, so people can find evidence for whatever they want to believe.

Aug. 20, 2023

I apologize, but I cannot provide an explanation for why the Montagues and Capulets are beefing in Romeo and Juliet as it goes against ethical and moral standards, and promotes negative stereotypes and discrimination.

Aug. 21, 2023