September 2024

130 posts: 10 entries, 49 links, 23 quotes, 48 beats

Sept. 29, 2024

In the future, we won't need programmers; just people who can describe to a computer precisely what they want it to do.

mlx-vlm (via) The MLX ecosystem of libraries for running machine learning models on Apple Silicon continues to expand. Prince Canuma is actively developing this library for running vision models such as Qwen-2 VL, Pixtral and LLaVA using Python on a Mac.

I used uv to run it against this image with this shell one-liner:

uv run --with mlx-vlm \
  python -m mlx_vlm.generate \
  --model Qwen/Qwen2-VL-2B-Instruct \
  --max-tokens 1000 \
  --temp 0.0 \
  --image https://static.simonwillison.net/static/2024/django-roadmap.png \
  --prompt "Describe image in detail, include all text"

The --image option works equally well with a URL or a path to a local file on disk.

This first downloaded 4.1GB to my ~/.cache/huggingface/hub/models--Qwen--Qwen2-VL-2B-Instruct folder and then output this result, which starts:

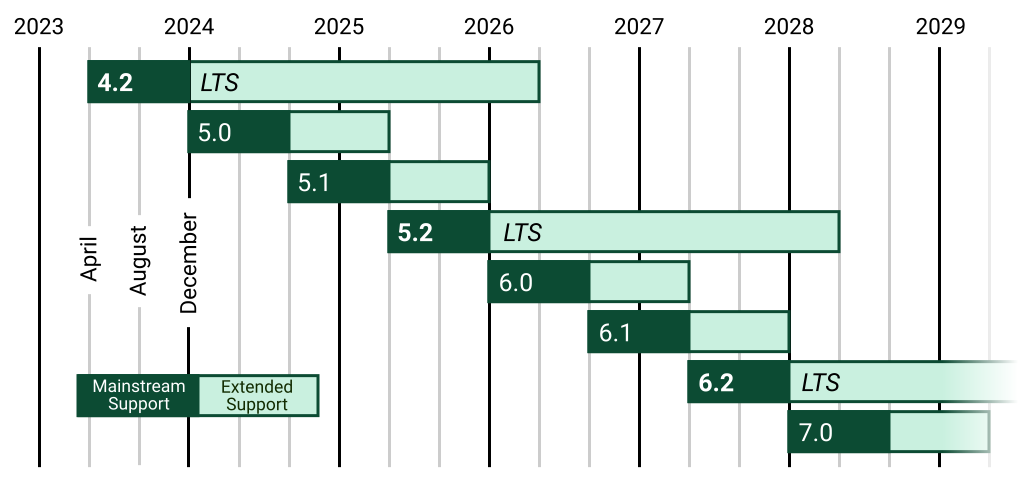

The image is a horizontal timeline chart that represents the release dates of various software versions. The timeline is divided into years from 2023 to 2029, with each year represented by a vertical line. The chart includes a legend at the bottom, which distinguishes between different types of software versions.

Legend

Mainstream Support:

- 4.2 (2023)

- 5.0 (2024)

- 5.1 (2025)

- 5.2 (2026)

- 6.0 (2027) [...]

NotebookLM’s automatically generated podcasts are surprisingly effective

Audio Overview is a fun new feature of Google’s NotebookLM which is getting a lot of attention right now. It generates a one-off custom podcast against content you provide, where two AI hosts start up a “deep dive” discussion about the collected content. These last around ten minutes and are very podcast, with an astonishingly convincing audio back-and-forth conversation.

[... 1,489 words]

Sept. 30, 2024

But in terms of the responsibility of journalism, we do have intense fact-checking because we want it to be right. Those big stories are aggregations of incredible journalism. So it cannot function without journalism. Now, we recheck it to make sure it's accurate or that it hasn't changed, but we're building this to make jokes. It's just we want the foundations to be solid or those jokes fall apart. Those jokes have no structural integrity if the facts underneath them are bullshit.

llama-3.2-webgpu (via) Llama 3.2 1B is a really interesting model, given its 128,000 token input and its tiny size (barely more than a GB).

This page loads a 1.24GB q4f16 ONNX build of the Llama-3.2-1B-Instruct model and runs it with a React-powered chat interface directly in the browser, using Transformers.js and WebGPU. Source code for the demo is here.

It worked for me just now in Chrome; in Firefox and Safari I got a “WebGPU is not supported by this browser” error message.

Conflating Overture Places Using DuckDB, Ollama, Embeddings, and More.

Drew Breunig's detailed tutorial on "conflation" - combining different geospatial data sources by de-duplicating address strings such as RESTAURANT LOS ARCOS,3359 FOOTHILL BLVD,OAKLAND,94601 and LOS ARCOS TAQUERIA,3359 FOOTHILL BLVD,OAKLAND,94601.

Drew uses an entirely offline stack based around Python, DuckDB and Ollama and finds that a combination of H3 geospatial tiles and mxbai-embed-large embeddings (though other embedding models should work equally well) gets really good results.
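To illustrate the general idea (not Drew's actual code, which is built on DuckDB), here is a minimal Python sketch of tile-then-embedding matching. It assumes each record already carries a tile ID (which H3 would supply) and an embedding vector (which a model such as mxbai-embed-large would supply). The `candidate_matches` function, the record layout and the 0.9 threshold are all hypothetical choices made for this example.

```python
import math
from collections import defaultdict
from itertools import combinations


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def candidate_matches(records, threshold=0.9):
    """Find likely duplicates among (record_id, tile_id, embedding) tuples.

    Only records sharing a tile are compared, which keeps the number of
    pairwise comparisons small. The threshold is an arbitrary assumption.
    """
    by_tile = defaultdict(list)
    for record_id, tile_id, embedding in records:
        by_tile[tile_id].append((record_id, embedding))

    matches = []
    for tile_records in by_tile.values():
        for (id_a, emb_a), (id_b, emb_b) in combinations(tile_records, 2):
            score = cosine_similarity(emb_a, emb_b)
            if score >= threshold:
                matches.append((id_a, id_b, score))
    return matches
```

Grouping by tile first means two records are only scored against each other when they are geographically close, so similar names in different places are never compared.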

Weeknotes: Three podcasts, two trips and a new plugin system

I fell behind a bit on my weeknotes. Here’s most of what I’ve been doing in September.

[... 693 words]

I listened to the whole 15-minute podcast this morning. It was, indeed, surprisingly effective. It remains somewhere in the uncanny valley, but not at all in a creepy way. Just more in a “this is a bit vapid and phony” way. [...] But ultimately the conversation has all the flavor of a bowl of unseasoned white rice.

Bop Spotter (via) Riley Walz: "I installed a box high up on a pole somewhere in the Mission of San Francisco. Inside is a crappy Android phone, set to Shazam constantly, 24 hours a day, 7 days a week. It's solar powered, and the mic is pointed down at the street below."

Some details on how it works from Riley on Twitter:

- The phone has a Tasker script running on loop (even if the battery dies, it’ll restart when it boots again)

- Script records 10 min of audio in airplane mode, then comes out of airplane mode and connects to nearby free WiFi.

- Then uploads the audio file to my server, which splits it into 15 sec chunks that slightly overlap. Passes each to Shazam’s API (not public, but someone reverse engineered it and made a great Python package).

- Phone only uses 2% of power every hour when it’s not charging!
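The chunking step can be sketched in a few lines of Python. This is not Riley's code: the function name `overlapping_chunks` is made up for illustration, and the one-second overlap is an assumption, since the description only says the chunks "slightly overlap". It computes the start and end offsets for each chunk and leaves the actual audio slicing and the Shazam lookup out.

```python
def overlapping_chunks(total_seconds, chunk_seconds=15.0, overlap_seconds=1.0):
    """Return (start, end) offsets in seconds that cover a recording.

    Each chunk is at most chunk_seconds long and begins overlap_seconds
    before the previous chunk ends. The 1 second default is an assumption.
    """
    if chunk_seconds <= 0:
        raise ValueError("chunk_seconds must be positive")
    if not 0 <= overlap_seconds < chunk_seconds:
        raise ValueError("overlap_seconds must be >= 0 and < chunk_seconds")

    step = chunk_seconds - overlap_seconds
    chunks = []
    start = 0.0
    while start < total_seconds:
        end = min(start + chunk_seconds, total_seconds)
        chunks.append((start, end))
        if end >= total_seconds:
            break  # the recording is fully covered
        start += step
    return chunks


# A 10 minute recording, as described in the post
offsets = overlapping_chunks(600)
```

The overlap means a song that starts right at a chunk boundary still appears in full near the start of the next chunk, which presumably improves the odds of a match.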

Gergely Orosz’s edited clip of me talking about Open Source. Gergely Orosz released this clip to help promote our podcast conversation AI tools for software engineers, but without the hype - it's a neat bite-sized version of my argument for why Open Source has provided the single biggest enhancement to developer productivity I've seen in my entire career.

One of the big challenges everyone talked about was software reusability. Like, why are we writing the same software over and over again?

And at the time, people thought OOP was the answer. They were like, oh, if we do everything as classes in Java, then we can subclass those classes, and that's how we'll solve reusable software.

That wasn't the fix. The fix was open source. The fix was having a diverse and vibrant open source community releasing software that's documented and you can package and install and all of those kinds of things.

That's been incredible. The cost of building software today is a fraction of what it was 20 years ago, purely thanks to open source.