599 posts tagged “llm”

LLM is my command-line tool for running prompts against Large Language Models.

2023

hubcap.php (via) This PHP script by Dave Hulbert delights me. It’s 24 lines of code that takes a specified goal, then calls my LLM utility on a loop to request the next shell command to execute in order to reach that goal... and pipes the output straight into `exec()` after a 3s wait so the user can panic and hit Ctrl+C if it’s about to do something dangerous!
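The loop described above can be sketched in Python. This is not a port of Dave Hulbert's script: `run_goal_loop`, the `next_command` callable, the `DONE` sentinel and the `execute` flag are all hypothetical names introduced for illustration. In real use, `next_command` would wrap a call to a language model; here it is left as a plain function so the control flow can be tried safely.

```python
import subprocess
import time
from typing import Callable, List


def run_goal_loop(
    goal: str,
    next_command: Callable[[str, List[str]], str],
    max_steps: int = 5,
    delay_seconds: float = 3.0,
    execute: bool = False,
) -> List[str]:
    """Request one shell command at a time and optionally run it.

    next_command is a hypothetical callable that receives the goal and the
    transcript so far, and returns the next command (or "DONE" to stop).
    """
    history: List[str] = []
    for _ in range(max_steps):
        command = next_command(goal, history).strip()
        if not command or command == "DONE":
            break
        print(f"Next command: {command}")
        # Pause so the user can hit Ctrl+C before anything runs.
        time.sleep(delay_seconds)
        output = ""
        if execute:  # off by default: running model output is dangerous
            result = subprocess.run(
                command, shell=True, capture_output=True, text=True
            )
            output = result.stdout + result.stderr
        # Feed the command and its output back in on the next iteration.
        history.append(f"$ {command}\n{output}")
    return history
```

Keeping execution behind an explicit flag and capping the number of steps are design choices of this sketch, added because the whole point of the original is how little stands between the model and the shell.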

Symbex 1.4. New release of my Symbex tool for finding symbols (functions, methods and classes) in a Python codebase. Symbex can now output matching symbols in JSON, CSV or TSV in addition to plain text.

I designed this feature for compatibility with the new “llm embed-multi” command—so you can now use Symbex to find every Python function in a nested directory and then pipe them to LLM to calculate embeddings for every one of them.

I tried it on my projects directory and embedded over 13,000 functions in just a few minutes! Next step is to figure out what kind of interesting things I can do with all of those embeddings.
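One common thing to do with a pile of embeddings is similarity search: rank stored vectors by cosine similarity to a query vector. The following is a minimal sketch of that idea in plain Python, assuming the embeddings have already been loaded into a dictionary keyed by an identifier. The function names, the identifiers and the tiny two-dimensional vectors are made up for illustration; real embedding vectors have hundreds of dimensions.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def most_similar(
    query: Sequence[float],
    embeddings: Dict[str, Sequence[float]],
    n: int = 3,
) -> List[Tuple[str, float]]:
    """Return the n (id, score) pairs closest to the query, best first."""
    scored = [
        (item_id, cosine_similarity(query, vector))
        for item_id, vector in embeddings.items()
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:n]


# Hypothetical identifiers and toy vectors, not real embedding output.
toy_embeddings = {
    "utils.py:parse_date": [1.0, 0.0],
    "utils.py:format_date": [1.0, 1.0],
    "cli.py:main": [0.0, 1.0],
}
for item_id, score in most_similar([1.0, 0.0], toy_embeddings, n=2):
    print(item_id, round(score, 3))
```

A linear scan like this is fine for tens of thousands of vectors; larger collections usually call for a dedicated vector index.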

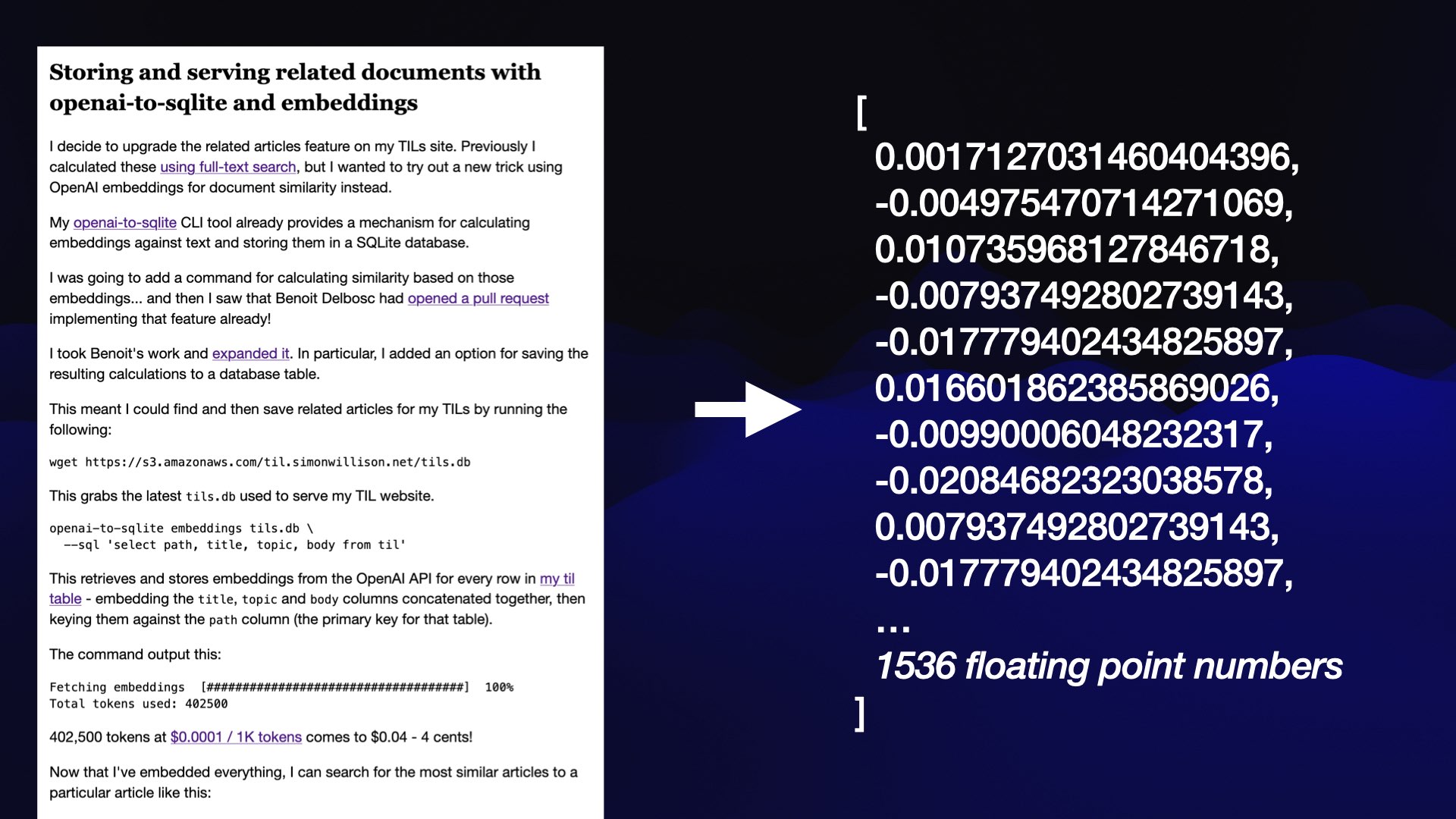

LLM now provides tools for working with embeddings

LLM is my Python library and command-line tool for working with language models. I just released LLM 0.9 with a new set of features that extend LLM to provide tools for working with embeddings.

[... 3,521 words]

Datasette 1.0a4 and 1.0a5, plus weeknotes

Two new alpha releases of Datasette, plus a keynote at WordCamp, a new LLM release, two new LLM plugins and a flurry of TILs.

[... 2,709 words]

Making Large Language Models work for you

I gave an invited keynote at WordCamp 2023 in National Harbor, Maryland on Friday.

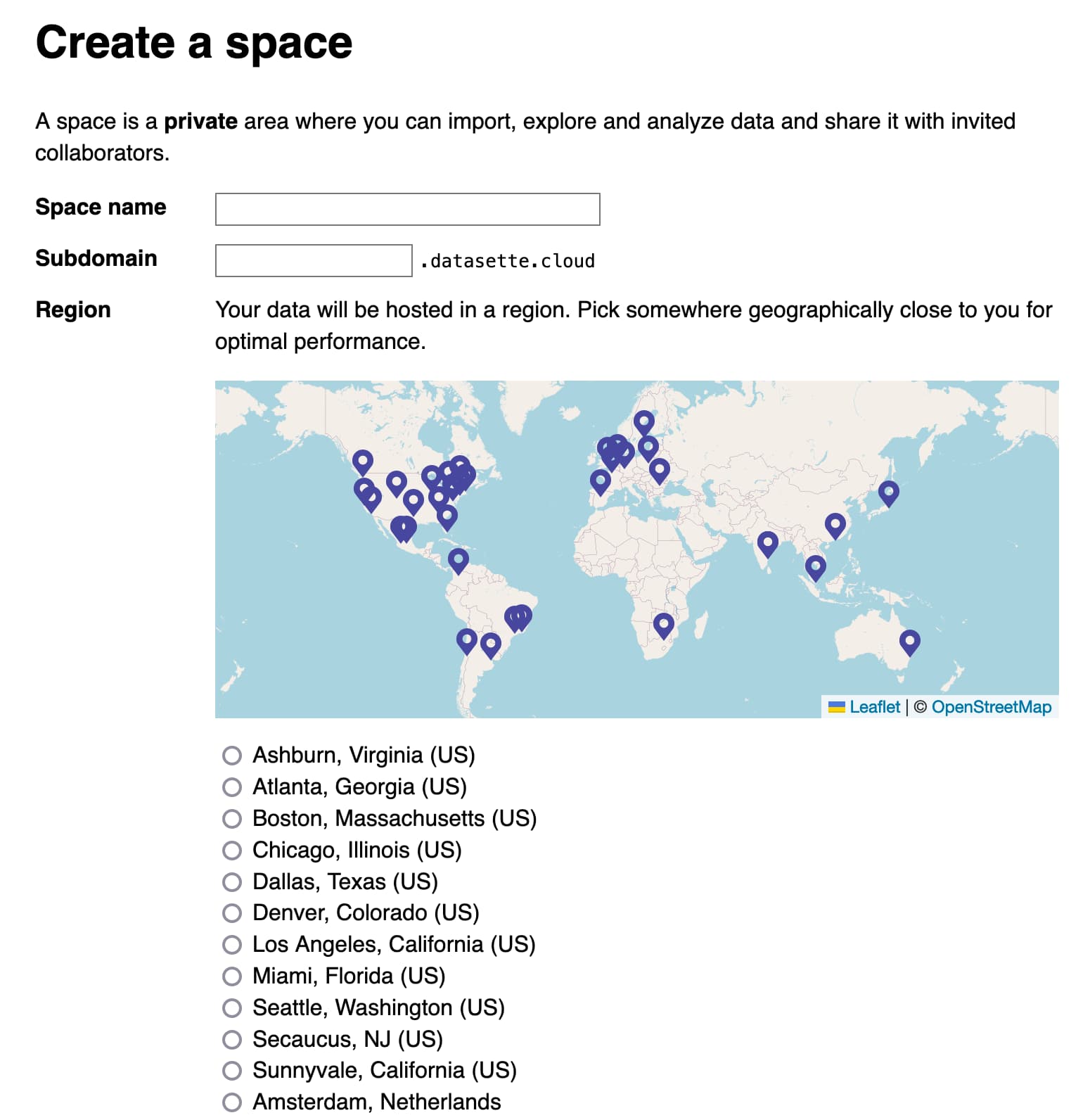

[... 14,189 words]

Datasette Cloud, Datasette 1.0a3, llm-mlc and more

Datasette Cloud is now a significant step closer to general availability. The Datasette 1.0a3 alpha release is out, with a mostly finalized JSON format for 1.0. Plus new plugins for LLM and sqlite-utils and a flurry of things I’ve learned.

[... 1,690 words]

Running my own LLM (via) Nelson Minar describes running LLMs on his own computer using my LLM tool and llm-gpt4all plugin, plus some notes on trying out some of the other plugins.

llm-mlc (via) My latest plugin for LLM adds support for models that use the MLC Python library—which is the first library I’ve managed to get to run Llama 2 with GPU acceleration on my M2 Mac laptop.